How Scrapbook works

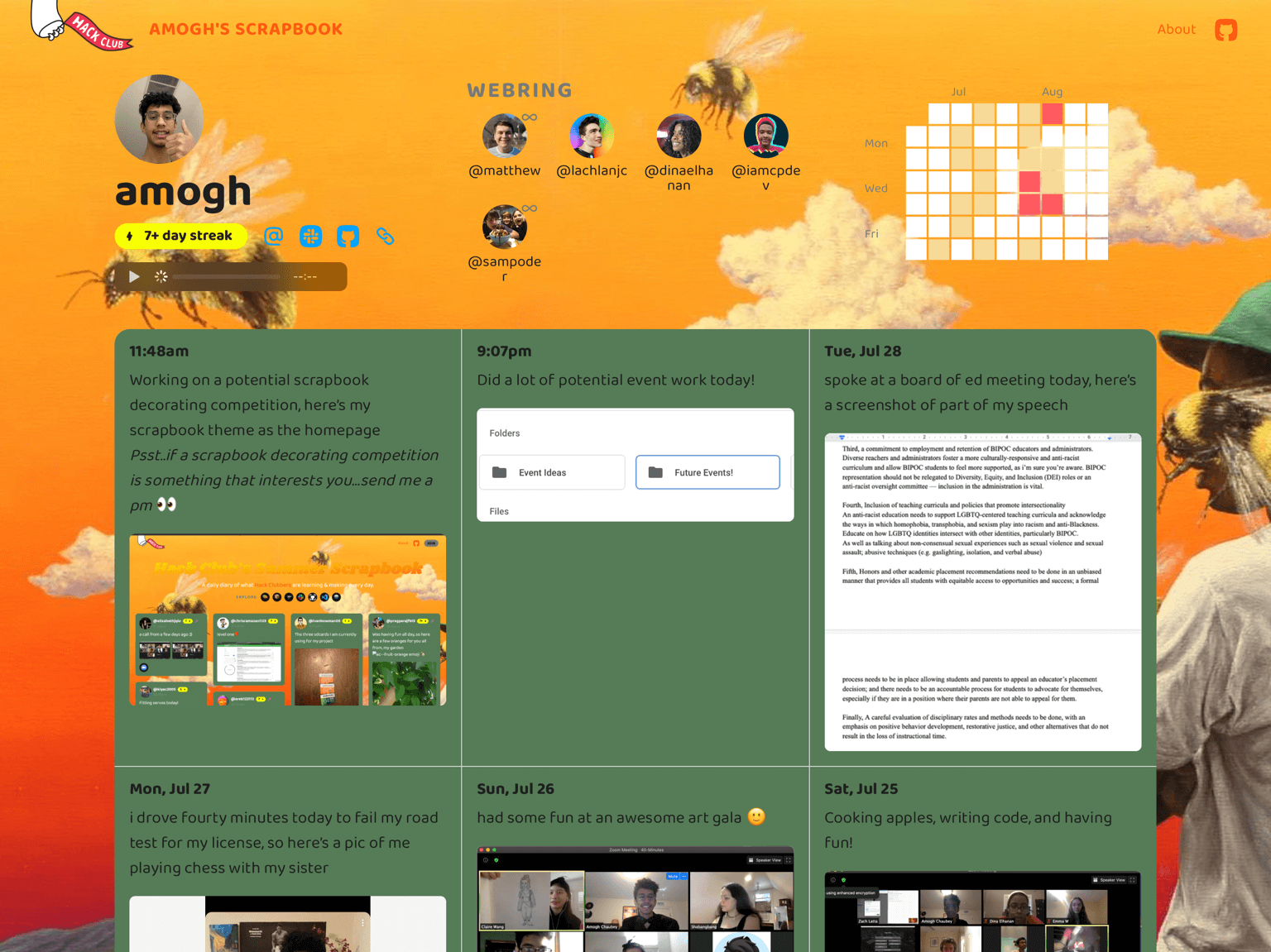

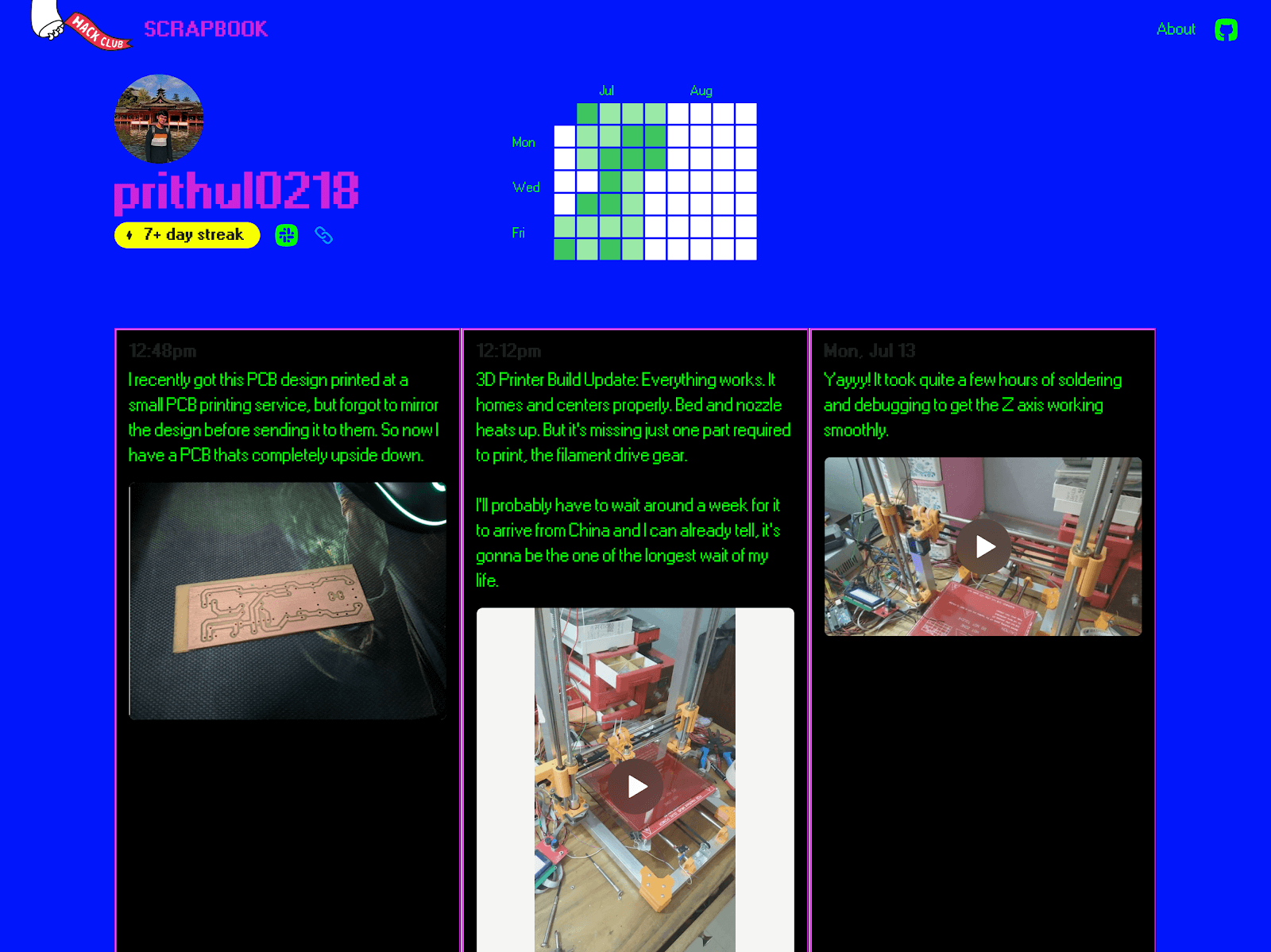

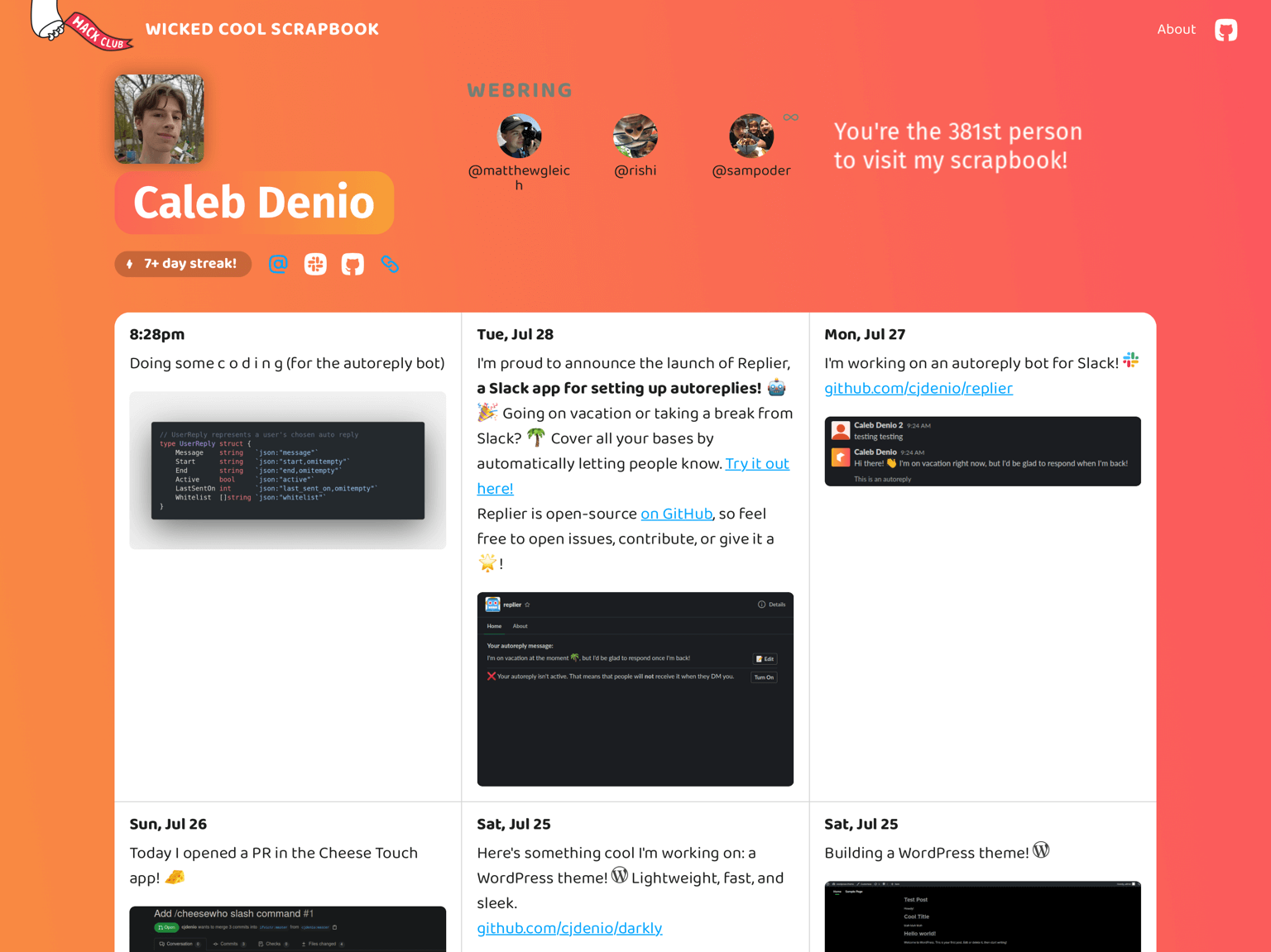

Throughout June & July, I’ve been designing & building the Hack Club Scrapbook, our primary Summer of Making initiative. The idea is to share daily updates on what you’re working on: every day this summer, Hack Clubbers are learning & building projects, then posting short video & photo updates in a Slack channel. A Slack bot, an Airtable base, & a Next.js website work together to make everything browsable on the public site. So far, 360 Hack Clubbers have posted 3,768 updates, with 7,910 unique emoji reactions. It’s been an incredibly exhilarating project to build. Today I wanted to shed some light on exactly how it works.

Early this year, I wrote a manifesto for web services at Hack Club. Essentially: decentralized, easy to spin up or down, based on Next.js + Airtable. Looking back at building Scrapbook, it feels like a real validation of this philosophy. The site has been totally manageable to build & maintain, has never gone down, and has worked pretty consistently. At the same time, while we’re not shutting it down, if we wanted to, we could turn off the Scrappy bot & walk away. The site would continue working as it does now with no hosting cost or maintenance.

I’ve never seen a website at some scale use a setup quite like ours, so I wanted to write about how we built the site on JAMstack tech.

Overview

Behind the scenes, the site (all open source!) runs on Next.js, React.js, & SWR for data fetching. All pages are static-rendered, hosted on Vercel. Videos are hosted by Mux. The custom domains use a Vercel serverless function. The Slack integration runs on Express.js, hosted on Heroku. We built the initial version in just a week.

We don’t have a database in a traditional sense. All the data is stored in Airtable & fetched using Airbridge, our API service for querying our Airtable projects as JSON. (We can pass Airbridge an Airtable API key to query anything in our account, or there’s a public list of tables & fields it exposes without any authentication.)

Scrappy

Scrappy, the Slack bot, has been by far the most complex part of the experience & required the most maintenance. This is only natural—the website is in the end a glorified JSON printer, but Scrappy is trying to bring personality to a set of several dozen interaction points & edge cases.

Scrappy started as a set of API Routes on the frontend codebase, but we ran into a bunch of issues running a Slack bot as serverless on Vercel: namely a poor logging/debugging experience, in addition to slow response times. In a hasty technical transition, we migrated the functions into their own codebase, which is still a Next.js app but using a custom server, hosted on Heroku. Avoiding serverless ended up being way more productive for the team.

Scrappy is Matthew’s domain & developed nearly entirely by him.

Frontend

The frontend is a Next.js app, where every page is static-rendered. It stores no data itself and handles very little state.

Data fetching

The most interesting part of this story is data fetching, on both the backend and frontend. Every page on the site uses Next’s getStaticProps feature, which means you’re always getting a static page served to you from Vercel’s CDN, for every user.

The data used for every page is accessible over an API, documented here, which runs on Next’s API Routes with dynamic routing. (The API itself is merely a wrapper around Airbridge, making specific requests & restructuring the data in a consistent schema instead of the raw Airtable fields.) Because API Routes are merely functions, their functionality can be imported like any other JavaScript function. So each frontend page imports the function that powers its API counterpart & runs it at build. Each page’s getStaticProps also returns a revalidate: x option. The page is generated statically at build time, & Next always serves that static page; whenever the page gets hit & it’s been more than x seconds since it was last generated, Next regenerates it in the background & updates its cache.
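Here’s a minimal sketch of that pattern, not the actual Scrapbook source. getPosts is a hypothetical stand-in for the function behind an API route (the real one would query Airbridge), the sample records are made up, & the 1-second revalidate value is an assumption standing in for x.

```javascript
// Assumed value for the "x" in `revalidate: x`; the real number may differ.
const REVALIDATE_SECONDS = 1

// Hypothetical stand-in for the function that powers an API route.
// The real one would query Airbridge; this returns fixed sample data
// so the sketch runs on its own.
async function getPosts() {
  return [
    { id: 'rec1', username: 'user1', text: 'Shipped the feed component' },
    { id: 'rec2', username: 'user2', text: 'Added a new emoji reaction' }
  ]
}

// In a Next.js page file this would be exported:
//   export async function getStaticProps() { ... }
async function getStaticProps() {
  // Call the API route's function directly instead of going over HTTP.
  const initialData = await getPosts()
  return {
    props: { initialData },
    // Keep serving the cached static page; when a request arrives more
    // than REVALIDATE_SECONDS after the last generation, regenerate the
    // page in the background.
    revalidate: REVALIDATE_SECONDS
  }
}
```

The API route itself can then be a thin wrapper that calls the same getPosts & sends the result as JSON, so the page & the API never drift apart.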

To keep builds fast, we don’t pre-render every page on the site at build, since that would take several minutes & slow down shipping changes. We pre-render only the 75 most active users (by querying Airbridge for them), and the emoji reactions that are linked to on the homepage.

For pages not pre-rendered, such as when a new user starts using Scrapbook or a new emoji is added, we enabled the fallback: true option of getStaticPaths. When a path is visited for a user profile not yet rendered, it on-the-fly queries Airbridge & renders the profile page & caches it before serving to you. If you’ve seen “Loading…” on a profile page, the backend was generating a page for the profile & caching it.
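A rough sketch of what the profile page’s getStaticPaths could look like under these assumptions: getTopUsernames is a hypothetical helper (the real app queries Airbridge for the most active users), the generated usernames are placeholders, & the dynamic route parameter is assumed to be called username.

```javascript
// Hypothetical helper: the real app would ask Airbridge for the most
// active users. This fabricates placeholder names so the sketch runs alone.
async function getTopUsernames(limit) {
  const everyone = Array.from({ length: 200 }, (_, i) => `user${i + 1}`)
  return everyone.slice(0, limit)
}

// In a Next.js page file this would be exported:
//   export async function getStaticPaths() { ... }
async function getStaticPaths() {
  const usernames = await getTopUsernames(75)
  return {
    // Only these profiles are rendered at build time.
    paths: usernames.map(username => ({ params: { username } })),
    // Any other profile is rendered on its first visit, then cached.
    fallback: true
  }
}
```

With fallback: true, the page component first renders without its props for a not-yet-generated path; Next’s router exposes an isFallback flag for that case, which is where a “Loading…” state would come from.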

On the frontend, you want to see posts in real-time as they’re added to Scrapbook, without reloading the page. For this, we use SWR, Vercel’s data fetching library. Most pages use the same Feed component, which polls the API endpoint for the page every few seconds. The data is cached on the frontend. If the network disconnects, there’s data cached, so we can keep showing it, & SWR will retry fetching at exponentially increasing intervals. Once it reconnects, no page reload is needed: SWR downloads the new data, re-renders, and caches it on the frontend for the future. This is all the built-in behavior of SWR, nothing custom, which is wild.
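As a hedged sketch of the pieces handed to SWR (not the real Feed component): the /api/posts key & the 5-second interval are assumptions, & the fetchImpl parameter only exists so the fetcher can be exercised outside a browser. fallbackData is the option name in SWR 1.0 and later; older releases called it initialData.

```javascript
// Fetcher in the shape SWR expects: it receives the key (here a URL)
// and resolves to parsed JSON. Throwing on a bad status lets SWR treat
// the response as an error and schedule a retry.
async function fetcher(url, fetchImpl = fetch) {
  const res = await fetchImpl(url)
  if (!res.ok) throw new Error(`Request failed with status ${res.status}`)
  return res.json()
}

// Options for the hook. The interval is an assumed value for
// "every few seconds".
function feedOptions(initialData) {
  return {
    // Data rendered into the static page, shown before the first fetch.
    fallbackData: initialData,
    // Poll the API this often, in milliseconds.
    refreshInterval: 5000
  }
}

// Inside a React component (needs React and the swr package, so it is
// shown as a comment rather than run here):
//   const { data } = useSWR('/api/posts', fetcher, feedOptions(initialData))
```

Caching & error retries with backoff aren’t configured anywhere in this sketch because they’re SWR defaults.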

To summarize: The page you’re served initially is static-rendered with all the data, & pretty much up-to-date, so you’re getting an instant response cached on a CDN. The component tree renders with the initial data passed down as props. Then SWR caches it on the frontend for good measure. Every few seconds, SWR refetches the data from an API function, which is the same function that ran at page build, to re-render on the client side. All of the pages of Scrapbook work like this.

Images & video

Images I think are still not an ideal experience on Scrapbook, but making them load quickly & fluidly was critical. After trying several packages & different techniques over time, I settled on rolling my own component, inspired by this example project’s, using intersection observers.

Video, somehow, was far simpler to integrate thanks to Mux Video. Serving video files directly from Airtable initially was super slow to load, so we instead upload videos directly to Mux on the backend, and they essentially livestream the footage directly into the <video> tag, without any proprietary libraries. On the frontend, using their Next.js example, I made a component with live thumbnails that plays videos on hover. Mux even wrote a case study about the integration with Scrapbook! Highly recommend Mux for a video-intensive project, especially with user-uploaded content.

CSS

CSS was an interesting part of this. Most of Hack Club’s websites use Theme UI & the Hack Club Theme, and our early prototype did. But to make CSS customization easy for beginners meant the HTML on profile pages had to be super readable, and Theme UI/Emotion creates randomly-named classes. So I switched to using an app-wide imported stylesheet for the basic theme, which worked seamlessly. For custom themes, it’s super simple—we add a <link> tag pointed to your CSS file. On pages that don’t allow customization, I lightly use styled-jsx. To share colors & fonts between the stylesheet, styled-jsx, & custom theme files, we use CSS Custom Properties. That’s it on CSS.

Analytics

Like other sites at Hack Club, we don’t use creepy tracking or analytics. We only use Fathom Analytics, which are public here, integrated following this guide.

Custom domains

Enabling/setup

Behind the scenes, when you invoke the Slack slash command to add a custom domain, it hits a route on Scrappy. That function:

- Sets the domain on your user record in Airtable

- Uses the Vercel API to add a custom domain to the project

- If you’ve previously set up a custom domain, uses the Vercel API to remove it from Vercel
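The steps above could be sketched roughly like this. Everything here is illustrative: db & vercel are hypothetical clients passed in by the caller (stand-ins for Airtable & the Vercel API, not real library interfaces), as are the customDomain field name & the function name.

```javascript
// `db` and `vercel` are hypothetical injected clients, not real SDKs.
async function setCustomDomain({ userId, domain }, { db, vercel }) {
  // Read the old domain first, before the record is overwritten.
  const user = await db.getUser(userId)
  const previous = user.customDomain || null

  // 1. Set the domain on the user record.
  await db.updateUser(userId, { customDomain: domain })

  // 2. Add the new domain to the hosting project.
  await vercel.addDomain(domain)

  // 3. Remove the previously configured domain, if there was a different one.
  const removed = previous && previous !== domain ? previous : null
  if (removed) await vercel.removeDomain(removed)

  return { added: domain, removed }
}
```

Passing the clients in as arguments keeps the flow easy to test with in-memory fakes, without touching Airtable or Vercel.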

Serving the site

The profile pages run in the Next.js app, where they’re statically rendered for performance. We want people to point their domains to their profiles, but you can’t CNAME to a specific path, so we need to serve the custom domain functionality at the root path. This would be doable with getServerSideProps in Next (checking the host header & serving the appropriate page), but it’d mean a performance hit (running a function instead of serving a static file) for every visit to the homepage, which we want to avoid. Instead, in a separate project we host a single serverless function, source code here, with an HTTP rewrite at the root to its path. When that site is accessed, we:

- Get the host header of your request

- Find the user by that domain in our Airtable database, accessed over Airbridge

- Fetch the HTML of the profile page from the live site

- Serve that HTML directly

This means social cards, etc still work as expected, because while slower than accessing the main Scrapbook domain, the custom domain serves the profile as a regular HTML page, even though it doesn’t generate any of that HTML or JS in the function. It also means we don’t have to update or test custom domain functionality on every change, since if it works on Scrapbook, it works on custom domains.
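A minimal sketch of a function along these lines, assuming a Node-style (req, res) handler. It is not the linked source: findUserByDomain & fetchHtml are hypothetical injected helpers (standing in for the Airbridge lookup & an HTTP GET), siteOrigin is a placeholder, & the /username profile path is an assumption.

```javascript
// `findUserByDomain` and `fetchHtml` are hypothetical helpers supplied
// by the caller; `siteOrigin` is the origin of the main site.
function createHandler({ findUserByDomain, fetchHtml, siteOrigin }) {
  return async function handler(req, res) {
    // Read the host header, dropping any port and normalizing case.
    const host = (req.headers.host || '').split(':')[0].toLowerCase()

    // Look up which user registered this domain.
    const user = await findUserByDomain(host)
    if (!user) {
      res.statusCode = 404
      res.end('No profile is linked to this domain')
      return
    }

    // Fetch the already-rendered profile page from the live site and
    // pass its HTML straight through.
    const html = await fetchHtml(`${siteOrigin}/${encodeURIComponent(user.username)}`)
    res.setHeader('Content-Type', 'text/html; charset=utf-8')
    res.end(html)
  }
}
```

Because the function only proxies HTML the main site has already rendered, it holds no profile markup of its own.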

I’ve never seen another website at some scale using Airtable for the entire backend & a collection of serverless functions & a fully static frontend, but this tech stack has been kind of a dream to work with. It’s impossible to introduce security issues, performance tuning has basically meant fetching less data per request, & the frontend I find to feel incredibly alive. Check out the code, & I’m happy to expand on any topics.